Episode #192 – The Universe Demands Horns

A persistent knowledge structure can only take one shape. Information is physical, verification is asymmetric, and those two facts force a Gabriel’s horn topology on anything that wants to keep growing. The interesting move is to run the same test against artificial intelligence, since most of what the frontier labs are shipping is shaped like a cylinder dressed as a horn.

Episode Summary

The universe has a preference. Not in the sense that it has feelings or desires, but in the sense that two physical facts force a single shape on anything that wants to persist. Information is physical, which means every bit you maintain against thermal noise carries a fuel bill. And verification is asymmetric: finding a valid answer is hard, checking one is cheap. That asymmetry is the intuition behind P versus NP, and it shows up in every substrate where knowledge gets generated, from quartz crystals to peer-reviewed mathematics. Put the two facts together and the question of what shape a durable structure can take becomes a geometry problem. Three answers are available, and only one of them works.
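The find-versus-check asymmetry is easiest to see in proof of work. The sketch below is a toy, not Bitcoin's actual rule: it assumes a single SHA-256 over a made-up header plus an 8-byte nonce, and treats "difficulty" as a count of required leading zero bits (Bitcoin itself uses double SHA-256 against a numeric target). The helper names are illustrative. Searching takes on average about 2^difficulty hashes; checking always takes one.

```python
import hashlib


def leading_zero_bits(digest: bytes) -> int:
    """Count the leading zero bits of a hash digest."""
    bits = 0
    for byte in digest:
        if byte == 0:
            bits += 8
            continue
        # A nonzero byte has bit_length 1..8; the gap to 8 is its zero prefix.
        bits += 8 - byte.bit_length()
        break
    return bits


def check(data: bytes, nonce: int, difficulty: int) -> bool:
    """Verification: exactly one hash, whatever the difficulty."""
    digest = hashlib.sha256(data + nonce.to_bytes(8, "big")).digest()
    return leading_zero_bits(digest) >= difficulty


def search(data: bytes, difficulty: int) -> tuple[int, int]:
    """Finding: brute-force nonces until check passes; returns (nonce, attempts)."""
    nonce = 0
    while not check(data, nonce, difficulty):
        nonce += 1
    return nonce, nonce + 1


if __name__ == "__main__":
    header = b"toy block header"
    for difficulty in (4, 8, 12, 16):
        nonce, attempts = search(header, difficulty)
        print(f"difficulty {difficulty:2d}: {attempts:6d} hashes to find, 1 to check")
```

Each extra bit of difficulty roughly doubles the expected search, while `check` stays a single hash.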

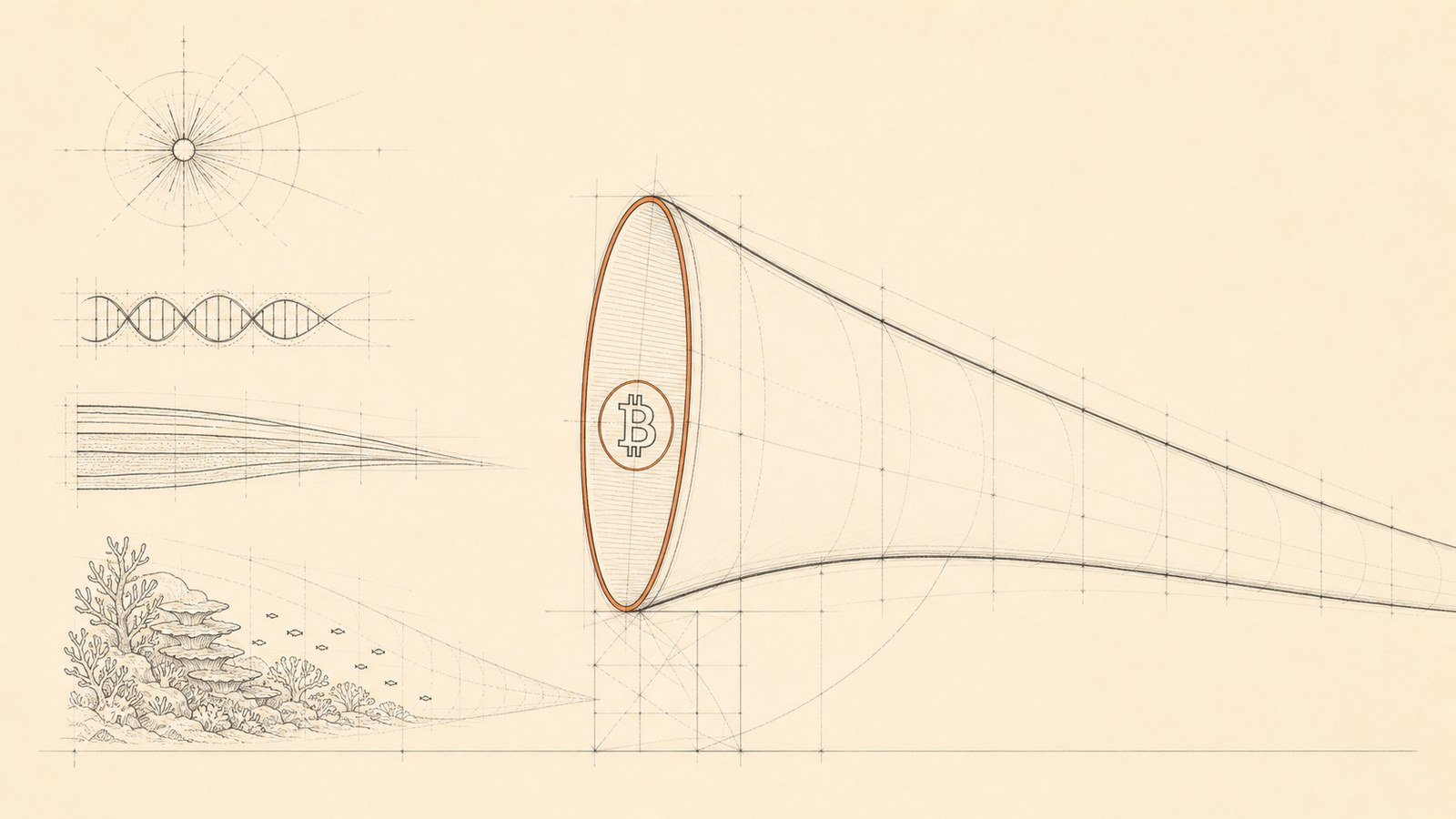

A cylinder grows its interior forever. The specification keeps expanding, with new rules and edge cases bolted on and nothing ever removed, until nobody can verify whether anything is actually compliant. Any modern country’s tax code is a cylinder. The verification cost runs away, the structure pays for it in heat, and the heat is what kills it.

A cone is the opposite failure mode. Volume and surface area are both fixed at the start, so no new evidence can land. Religious texts carved in stone are cones. So was the Soviet five-year plan, which is why, the moment reality moved past it, the system had no way to integrate the change.

That leaves the horn, Gabriel’s horn: finite interior, unbounded boundary. Verification stays cheap because the rule book is small, and the boundary keeps growing forever because every new tick gets checked against the same lean specification. Coral reefs are horns. The biological rules that govern coral growth do not really change, and yet the reef accretes for thousands of years. Sediment layers are horns. The physics of compression is fixed, and the geological record keeps deepening. Crystals, stars, genomes, theorems, and Bitcoin are horns too. Bitcoin is the first one humans have built on purpose.
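The shape is named after the surface formed by rotating y = 1/x, for x ≥ 1, about the x-axis. The standard calculation below shows that "finite interior, unbounded boundary" is literal geometry; the mapping of volume to rule book and surface to verified record is the episode's analogy, not part of the math.

```latex
% Gabriel's horn: rotate y = 1/x, x >= 1, about the x-axis.
V = \pi \int_{1}^{\infty} \left(\frac{1}{x}\right)^{2} dx
  = \pi \left[ -\frac{1}{x} \right]_{1}^{\infty}
  = \pi

% Surface area, using y' = -1/x^2 so that 1 + (y')^2 = 1 + 1/x^4:
A = 2\pi \int_{1}^{\infty} \frac{1}{x}\sqrt{1 + \frac{1}{x^{4}}}\, dx
  \;\ge\; 2\pi \int_{1}^{\infty} \frac{dx}{x}
  = 2\pi \lim_{b \to \infty} \ln b
  = \infty
```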

The relevant equation, developed across the prior episodes, is K = Ic². Knowledge equals information times constraint quality squared. Information is the raw arrangement, the bits, the output, and it is cheap. Constraint is what you pay when content has been pressed against reality and survived. Information is easy; constraint is expensive; and the two get confused all of the time. That confusion is the Shannon trap. Claude Shannon’s 1948 information theory left meaning out on purpose, and the field has been forgetting that ever since. Now consider what a frontier lab is selling. Two things at once: a system that is smarter than every human alive, and a system that is going to produce real knowledge. For the pitch to hold, both have to be true. They contradict. A model smarter than every living human has no peer at its own scale, and constraint quality comes from peers. Anthropic, OpenAI, Google: their ultimate-knowledge-generator pitch is a cylinder dressed as a horn. When c is zero, K is zero no matter how much information the system produces.
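A minimal sketch of K = Ic² as plain arithmetic. The episode does not fix units, so this assumes constraint quality c is normalized to the range 0 to 1 and treats I as a simple count; the function name is hypothetical. It shows the two properties the argument leans on: no amount of information rescues c = 0, and because c is squared, halving constraint quality quarters K.

```python
def knowledge(information: float, constraint: float) -> float:
    """K = I * c**2.

    Assumption for illustration: constraint quality c lies in [0, 1];
    the episode does not define units for I or c.
    """
    if not 0.0 <= constraint <= 1.0:
        raise ValueError("constraint quality is assumed to lie in [0, 1]")
    return information * constraint ** 2


if __name__ == "__main__":
    # Scaling information a millionfold does nothing when c is zero.
    for information in (1e3, 1e6, 1e9):
        print(f"I = {information:.0e}, c = 0.0 -> K = {knowledge(information, 0.0)}")
    # Because c is squared, halving constraint quality quarters K.
    print(knowledge(100.0, 1.0), knowledge(100.0, 0.5))
```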

The failure mode has the shape of Maxwell’s demon. Maxwell sketched a hypothetical creature that sorted molecules without paying any thermodynamic cost, and for nearly a century the demon looked like it might be allowed. Rolf Landauer at IBM supplied the missing floor in 1961: erasing a bit has an unavoidable energy cost, so the demon has to pay somewhere, at the latest when it clears its memory. A system that looks like it is sorting molecules for free is doing one of two things: either it is paying the cost off-screen, or it is not actually sorting. Current large language models are the anti-demon: they appear to generate order at no cost, while the constraint is being paid by the humans annotating the outputs, the engineers shaping the prompts, and the editors cleaning the final text before it ships. The labs at the source are running the Shannon trap at planetary scale, and the constraint they harvest from users is what makes the early outputs look like knowledge.
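Landauer's floor has a closed form: erasing one bit at temperature T dissipates at least k_B T ln 2 of energy. A short calculation, assuming 300 K as room temperature and using the exact 2019 SI values of the Boltzmann constant and the electron volt:

```python
import math

BOLTZMANN = 1.380649e-23         # J/K, exact in the 2019 SI
ELECTRON_VOLT = 1.602176634e-19  # J, exact in the 2019 SI


def landauer_limit(temperature_k: float) -> float:
    """Minimum energy in joules to erase one bit at temperature T: k_B * T * ln 2."""
    return BOLTZMANN * temperature_k * math.log(2)


if __name__ == "__main__":
    e_bit = landauer_limit(300.0)  # assumed room temperature
    print(f"{e_bit:.3e} J per bit, {e_bit / ELECTRON_VOLT:.4f} eV")
```

At 300 K this comes to about 2.87 × 10⁻²¹ J, roughly 0.018 eV, per erased bit. The figure is tiny, but it is never zero, and that is what closes the loophole the demon relied on.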

The dilution mechanism has a precise monetary analogue. Richard Cantillon, writing in the eighteenth century, observed that new money does not enter an economy evenly. It enters at specific points and ripples outward, benefiting the first holders, who spend it before prices have adjusted, and crushing the late receivers, whose units buy less by the time the money reaches them. AI text now produces the same Cantillon effect across the information substrate. The frontier labs are the central bank. Engineers and operators with direct API access are the early receivers; near the source, AI text works as a productivity multiplier because human attention is still paying the constraint. Out at the periphery the filter thins to nothing. Auto-generated articles flood the search results, bots reply to bots, customer service systems answer AI-formatted complaints with AI-formatted answers, and most of the substrate ends up as AI text being read by AI systems producing more AI text. John Boyd, the U.S. Air Force colonel who developed the OODA loop (observe, orient, decide, act), singled out orient as the slow step: the moment a pilot interprets what he sees against his existing model of the world. AI text propagates faster than humans can finish orienting. The medium degrades because of the gap between generation rate and verification rate, regardless of whether any individual output is wrong. Lyn Alden has made the same observation about gold in the era of the telegraph: once claims can travel faster than the settlement layer can clear them, the settlement layer breaks.
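The rate-gap argument can be made concrete with a toy model. It assumes constant per-step rates for generation and verification and a pool that never discards anything, and every item in it could be perfectly correct; the function name and the numbers are illustrative, not measurements.

```python
def simulate(generation_rate: float, verification_rate: float,
             steps: int) -> tuple[list[float], float]:
    """Return the unverified backlog after each step and the final unverified share.

    Toy assumptions: constant rates per step, verifiers clear at most
    verification_rate items per step, and nothing is ever discarded.
    """
    produced = 0.0
    verified = 0.0
    backlog_history = []
    for _ in range(steps):
        produced += generation_rate
        # Verifiers clear what they can this step, never more than is waiting.
        verified += min(verification_rate, produced - verified)
        backlog_history.append(produced - verified)
    unverified_share = (produced - verified) / produced if produced else 0.0
    return backlog_history, unverified_share


if __name__ == "__main__":
    for g, v in ((10.0, 10.0), (100.0, 10.0)):
        backlog, share = simulate(g, v, steps=5)
        print(f"generate {g:.0f}/step, verify {v:.0f}/step: "
              f"backlog {backlog}, unverified share {share:.2f}")
```

With generation at 100 items per step and verification at 10, the backlog grows by 90 every step and the unverified share settles at 1 - v/g = 0.9. When verification keeps pace, the backlog stays at zero.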

Not every AI deployment is dying this way. A clean line runs through the technology, separating systems coupled to fast verifiers from systems coupled to slow social verifiers, and the first kind is producing real knowledge. Coding agents are coupled to a compiler. Code either compiles and runs or it does not, and the verifier runs at machine speed. AlphaFold is coupled to protein-folding ground truth and to the database of experimentally determined structures. A predicted structure matches the wet-lab result or it does not. Robotics is coupled to physical reality. The grasp succeeds, the robot moves, the battery recharges, or none of those things happen. Drug discovery is coupled to the cell or the patient. A candidate compound binds the target at the predicted affinity or it does not make it past phase one. Where physical reality is verifying, AI generates real constraint. Where the only verifier is human attention or social consensus, AI generates slop. The economy is already sorting on exactly this line without naming what it is sorting on, which is why Anthropic’s revenue from coding products is growing faster than its revenue from the chat window. In coding, the substrate provides cheap, fast, structural verification; the model iterates inside the loop until the verifier passes; and the training signal is execution, not human preference.
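The coupled loop described for coding agents can be sketched as generate-then-verify. This is a hedged illustration, not how any particular lab's agent works: the candidate functions are hypothetical stand-ins for successive model samples, and the verifier is a handful of fixed test cases executed at machine speed.

```python
from typing import Callable, Iterable, Optional

Candidate = Callable[[list], list]


def verify_sort(candidate: Candidate) -> bool:
    """Machine-speed verifier: execute the candidate on fixed cases and compare."""
    cases = [[], [1], [3, 1, 2], [5, 5, -1, 0]]
    try:
        return all(candidate(list(case)) == sorted(case) for case in cases)
    except Exception:
        # A crash is a failed verification, not an error in the loop.
        return False


def first_passing(candidates: Iterable[Candidate],
                  verifier: Callable[[Candidate], bool]) -> tuple[Optional[Candidate], int]:
    """Try candidates in order; return the first that passes and how many were tried."""
    attempts = 0
    for candidate in candidates:
        attempts += 1
        if verifier(candidate):
            return candidate, attempts
    return None, attempts


# Hypothetical stand-ins for successive model samples.
def wrong_identity(xs):
    return xs


def wrong_reverse(xs):
    return xs[::-1]


def crashes_on_empty(xs):
    return [xs[0]]  # IndexError on an empty list


def insertion_sort(xs):
    out = []
    for x in xs:
        i = len(out)
        while i > 0 and out[i - 1] > x:
            i -= 1
        out.insert(i, x)
    return out


if __name__ == "__main__":
    samples = [wrong_identity, wrong_reverse, crashes_on_empty, insertion_sort]
    winner, tried = first_passing(samples, verify_sort)
    print(winner.__name__ if winner else None, "after", tried, "attempts")
```

The verifier holds no opinion about the candidates; it runs them. A crash counts as a failure, and the loop stops at the first candidate that passes.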

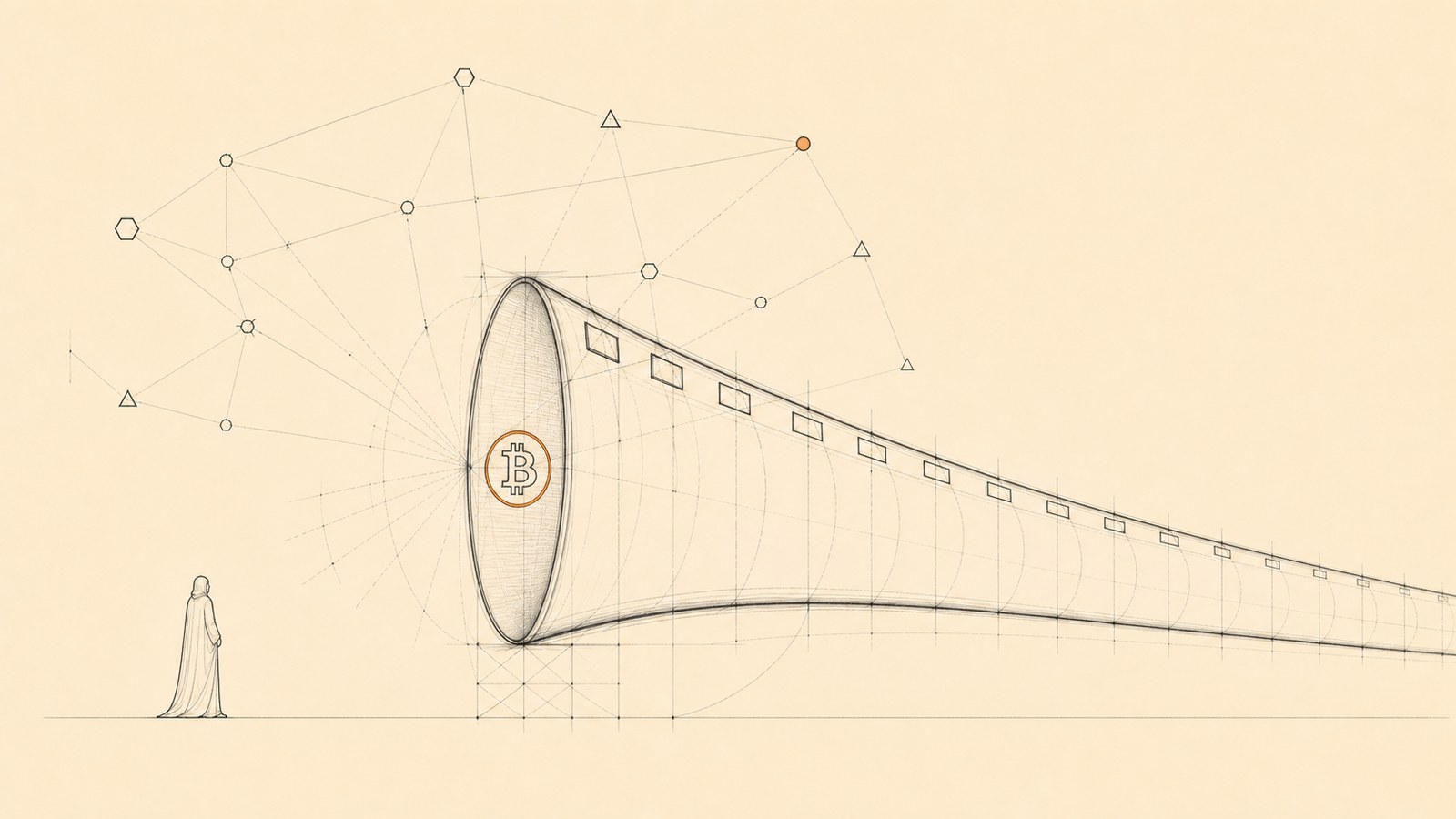

This sorting suggests a diagnostic, the Horn Test. Four questions describe whether any system is actually generating knowledge or only emitting information. What is its verification surface? Does it have independent verifiers? Do verifications stack up over time? And is finding the answer harder than checking it?

Bitcoin scores four out of four. The verification surface is every node on Earth, running the rules continuously since 2009. The verifiers are independent across software clients, jurisdictions, incentive structures, and hardware. Verifications have stacked up one block at a time, roughly ten minutes apart, for sixteen years. And the asymmetry between mining and checking spans many orders of magnitude: finding a valid block takes an enormous number of hash attempts across the whole network, while checking its proof of work takes a single hash of the block header. AlphaFold also scores four out of four, for the same kind of reasons applied to protein structure. The regular ChatGPT window scores zero out of four: the verifier is the user, who is usually not an expert; two queries on the same model are not two independent verifications; the model does not remember anything between conversations; and finding and checking cost the same, which is nothing.

Friedrich Hayek made the underlying point in 1945, and later named the result the extended order. It works because verification is cheap and the things being claimed are expensive, and distributed local verifiers paying constraint on their own decisions beat any central planner, because no central planner can match their aggregate work. AI is reversing that asymmetry. Claim-making is becoming free; verification still requires real contact with reality.

Roman Yampolskiy, on Peter McCormack’s show, has called for halting the global AI industry to avoid the catastrophe of an unaligned system. The horn framework points to a smaller and more durable move. Refuse to treat low-constraint output as knowledge. Build the verification substrate that tells the two apart. That substrate has a shape, and the shape is the one Bitcoin already runs at planetary scale.
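The Horn Test can be written down as a scorecard. This sketch assumes each of the four questions collapses to a yes/no answer, which simplifies what are really judgment calls; the class and field names are hypothetical, and the answers for Bitcoin, AlphaFold, and the chat window are the ones given in the episode.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class HornTest:
    """The four Horn Test questions, reduced to yes/no answers (a simplification)."""
    name: str
    has_verification_surface: bool      # Do claims meet reality somewhere checkable?
    independent_verifiers: bool         # Are the checks run by independent parties?
    verifications_accumulate: bool      # Do verifications stack up over time?
    finding_harder_than_checking: bool  # Is search costlier than verification?

    def score(self) -> int:
        """Number of questions answered yes, from 0 to 4."""
        return sum((
            self.has_verification_surface,
            self.independent_verifiers,
            self.verifications_accumulate,
            self.finding_harder_than_checking,
        ))


if __name__ == "__main__":
    systems = [
        HornTest("Bitcoin", True, True, True, True),
        HornTest("AlphaFold", True, True, True, True),
        HornTest("chat window", False, False, False, False),
    ]
    for system in systems:
        print(f"{system.name}: {system.score()}/4")
```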

Timestamps

- [00:00] Cold open. The afterburner is on

- [04:09] The universe has a preference for horns

- [06:11] The biggest dilution event in the history of order generation

- [06:38] Frontier labs running the Shannon trap at planetary scale

- [08:19] The architecture that money needed is the architecture information needs

- [09:11] Two physical facts that force the shape

- [10:16] Three possible shapes for a persistent structure

- [11:29] The cylinder: tax code and the regulations that only ever grow

- [12:37] The cone: stone tablets and the Soviet five-year plan

- [13:55] The horn: coral reef, sediment, crystal, star, genome

- [16:25] Bitcoin as the first horn built on purpose

- [17:00] Where Yampolskiy’s case breaks: intelligence is not information output

- [18:16] K = Ic² recap: information is cheap, constraint is expensive

- [20:32] Anthropic, OpenAI, Google: cylinders dressed as horns

- [22:12] The anti-demon and Landauer’s 1961 floor

- [23:03] The Cantillon effect for information

- [26:50] John Boyd and the orient step of the OODA loop

- [28:46] The clean line: substrates with fast verifiers

- [29:43] Why Anthropic’s coding revenue is the tell

- [31:19] The Horn Test in four questions

- [32:08] Bitcoin scores four out of four

- [33:25] Information is going to get cheaper than free

- [34:24] Refuse low-constraint output as knowledge; the shape is Bitcoin’s

Timestamps are estimates.

Topics Discussed

- The two physical facts that force a Gabriel’s horn topology on any persistent knowledge structure

- Why the cylinder fails: unbounded interior growth and runaway verification cost, with the tax code as the worked example

- Why the cone fails: a frozen interior that cannot integrate new evidence, with religious texts and the Soviet five-year plan as worked examples

- The horn as the only shape that ratchets, with coral reefs, sediment, and crystals as physical instances and Bitcoin as the engineered one

- K = Ic² applied to large language models: when constraint quality is zero, the output is information without knowledge

- The anti-demon: a system that appears to generate order at no cost while the constraint is paid off-screen

- The Shannon trap at planetary scale, run by the frontier labs

- The Cantillon effect for information, with the labs as the central bank and the periphery as the late receivers

- John Boyd’s OODA loop and why AI text propagates faster than the human verification loop can close

- The Horn Test: four questions that score any candidate knowledge-generating system

- Why coding agents, AlphaFold, drug discovery, and robotics generate real knowledge while the ChatGPT window does not

- Hayek’s extended order, the asymmetry between cheap verification and expensive claims, and what happens when AI flips that asymmetry

Links & References

- Claude E. Shannon, “A Mathematical Theory of Communication” (Bell System Technical Journal, 1948)

- Rolf Landauer, “Irreversibility and Heat Generation in the Computing Process” (IBM Journal of Research and Development, 1961)

- James Clerk Maxwell, Theory of Heat (Longmans, 1871) — the demon thought experiment in chapter 12

- Richard Cantillon, Essai sur la Nature du Commerce en Général (1755), Mises Institute edition

- Friedrich Hayek, “The Use of Knowledge in Society” (American Economic Review, 1945)

- Frans P. B. Osinga, Science, Strategy and War: The Strategic Theory of John Boyd (Routledge, 2007) — the source on the OODA loop

- John Jumper et al., “Highly accurate protein structure prediction with AlphaFold” (Nature, 2021)

- Roman Yampolskiy, AI: Unexplainable, Unpredictable, Uncontrollable (CRC Press, 2024)

- Lyn Alden, “What Is Money, Anyway?” (lynalden.com)

- Satoshi Nakamoto, Bitcoin: A Peer-to-Peer Electronic Cash System (2008)

Related Episodes

- Episode #189 – Gabriel’s Horn: The Shape of Everything: the universal blueprint for every structure that creates, stores, or processes knowledge.

- Episode #190 – Time: time as the ordered accumulation of irreversible state transitions inside a constraint structure.

- Episode #191 – Why The Search Has To Be Expensive: P versus NP as the asymmetry that makes the horn possible.

- Episode #186 – The Anti-Demon: the first statement of K = Ic² and the framework this episode runs on top of.

Notable Pull Quotes

“The architecture that money needed is the architecture that information needs now.”

“We are living through the biggest dilution event in the history of information, in the history of order generation.”

“They are running the Shannon trap at basically planetary scale.”

“Bitcoin is the first one that humans built on purpose.”

“The shape is the same shape that Bitcoin has.”
